Move from dashboards to decisions

I design analytics that connect model outputs to field actions: what changed, why it matters, and what a service or quality team should do next.

I bridge the gap between raw industrial sensor data and actionable intelligence — designing scalable data pipelines, predictive maintenance models, and AI-powered dashboards for heavy industries.

Impact Snapshot

The common thread across my work is turning messy operational data into systems engineers can trust, maintain, and improve.

I translate equipment behavior into schemas, features, validation rules, and notebooks that data scientists and domain engineers can both reason about.

I work across Japanese and English technical teams, keeping requirements, data definitions, experiments, and deployment constraints aligned.

About Me

I'm an engineer working at the intersection of data, AI, and product reliability — building and supporting predictive maintenance, quality analytics, and design-verification workflows for globally deployed precision instruments in a regulated industry.

At Hitachi High-Technologies, I contribute to fleet-scale AI predictive maintenance development with international R&D partners, drive root-cause analytics on operational telemetry, and act as the bridge between equipment domain knowledge and the data-science teams that turn it into models.

I'm passionate about bridging the OT / IT gap in regulated, mission-critical industries, and I thrive in environments where data engineering and hands-on domain expertise combine to drive real-world reliability and cost outcomes.

What I Bring

Hands-on understanding of operational data from globally deployed precision instruments — failure modes, sensor characteristics, and end-user maintenance workflows.

From raw sensor ingestion (REST/Kafka) to data modeling, cleansing, transformation, and visualization — I own the full pipeline.

Building ML models and AI agents that translate data patterns into actionable maintenance decisions, not just dashboards.

CI/CD-first development with Docker, GitHub Actions, and Azure — building solutions that scale beyond the initial pilot.

Technical Skills

Built around the industrial data & AI engineering lifecycle — from edge sensor to executive dashboard.

Experience

Building industrial intelligence through data engineering and predictive analytics.

Featured Project

Open-source implementation

An end-to-end Python pipeline on NASA's CMAPSS turbofan degradation dataset — strict-schema data loader, feature engineering, baseline and gradient-boosted RUL regressors, with executed Jupyter notebooks showing every result.

Results from the executed notebooks

Every figure below is rendered straight from the executed notebook in the repository — click any card to open the full notebook on GitHub.

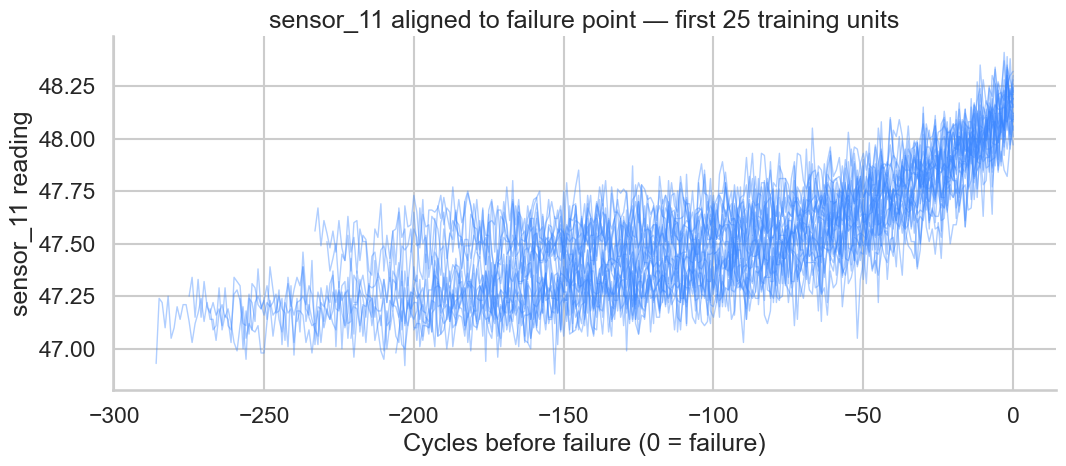

Sensor 11 for 25 training units, plotted against cycles before failure. The clean monotonic drift over the last ~80 cycles motivates the piecewise-linear RUL relabelling.

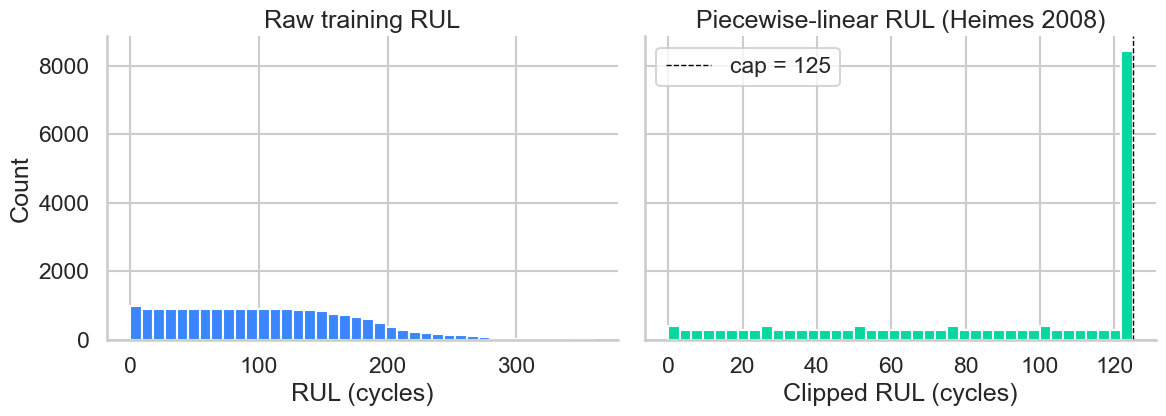

Capping the regression target at 125 cycles concentrates model capacity on the regime where degradation is observable. This follows the Heimes (2008) convention.
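A minimal sketch of the capped labelling described above, assuming each training unit runs to failure so that its last recorded cycle has RUL 0. The function name `piecewise_rul` and its `cap` argument are illustrative and not taken from the repository.

```python
def piecewise_rul(cycles, cap=125):
    """Return capped RUL labels for one unit's run-to-failure trajectory.

    `cycles` holds the cycle indices of a single unit; the largest value is
    treated as the failure point (an assumption of this sketch).
    """
    last_cycle = max(cycles)
    # Linear RUL counts down to 0 at failure; the cap flattens the early,
    # healthy part of the trajectory to a constant target.
    return [min(last_cycle - c, cap) for c in cycles]


labels = piecewise_rul(list(range(1, 201)))
print(labels[0], labels[74], labels[75], labels[-1])  # prints: 125 125 124 0
```

For a 200-cycle unit the target stays flat at 125 for the first 75 cycles and then decreases by one per cycle until failure.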

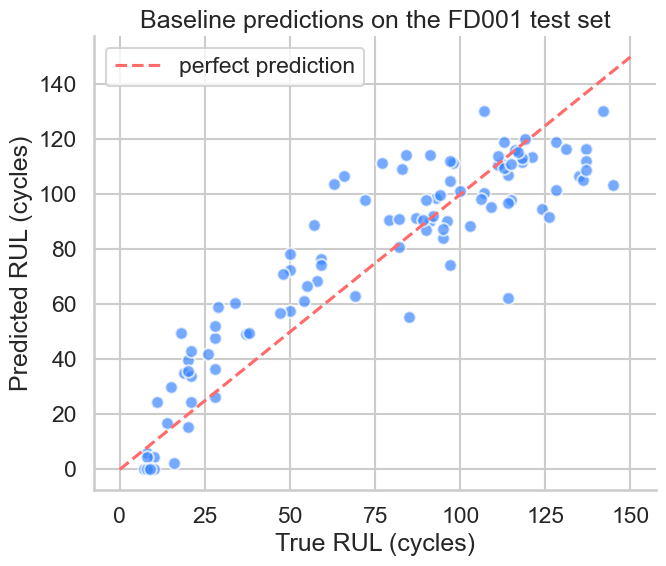

Ridge (L2-regularised) regression on standard-scaled rolling features. It sets the floor that any non-linear model must clearly beat to justify its complexity.

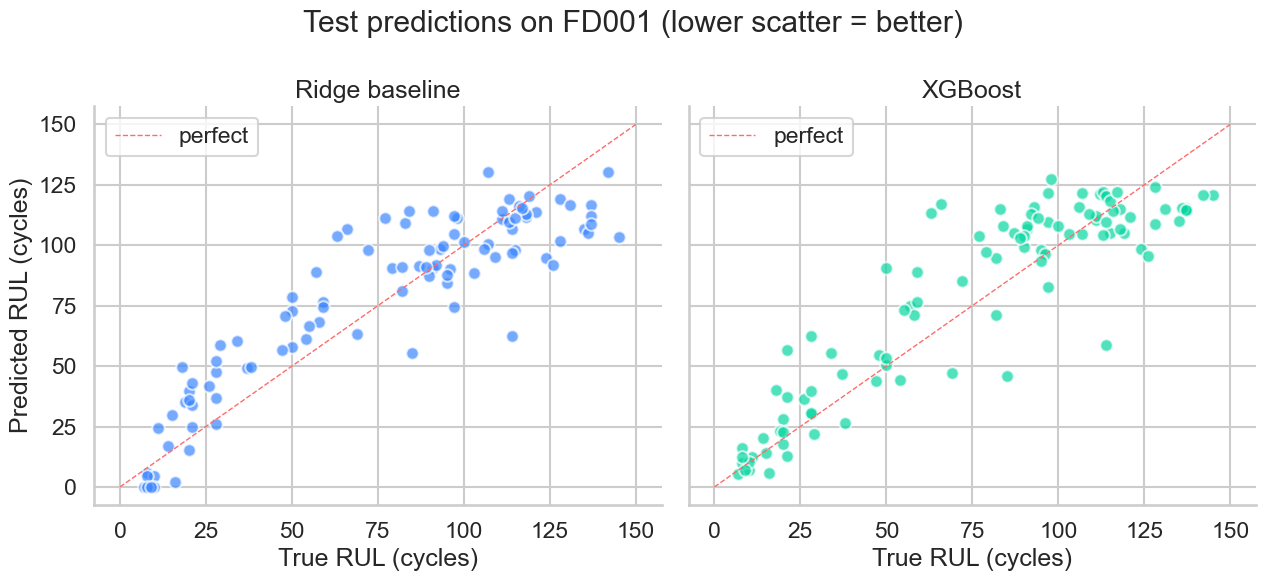

Tuned XGBoost edges out Ridge on RMSE but loses on the asymmetric S-score. The honest result: FD001's single regime is exactly where linear features compete with trees.
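To make the RMSE versus S-score trade-off concrete, here is a small sketch of both metrics. It assumes the S-score is the scoring function commonly used with C-MAPSS (from the PHM08 challenge), which penalises late predictions more heavily than early ones. The function names are illustrative.

```python
import math


def rmse(y_true, y_pred):
    """Root-mean-square error; symmetric in the sign of the error."""
    return math.sqrt(sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def s_score(y_true, y_pred):
    """Asymmetric score: over-estimating RUL costs more than under-estimating."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        d = p - t  # d > 0 means the predicted RUL is too optimistic (late warning)
        if d >= 0:
            total += math.exp(d / 10.0) - 1.0
        else:
            total += math.exp(-d / 13.0) - 1.0
    return total


truth = [50.0, 50.0]
early = [40.0, 40.0]  # predicts failure 10 cycles too soon
late = [60.0, 60.0]   # predicts failure 10 cycles too late

print(rmse(truth, early) == rmse(truth, late))        # same RMSE
print(s_score(truth, late) > s_score(truth, early))   # late errors score worse
```

Two models with identical RMSE can therefore rank differently on the S-score, depending on whether their errors fall on the optimistic or the conservative side.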

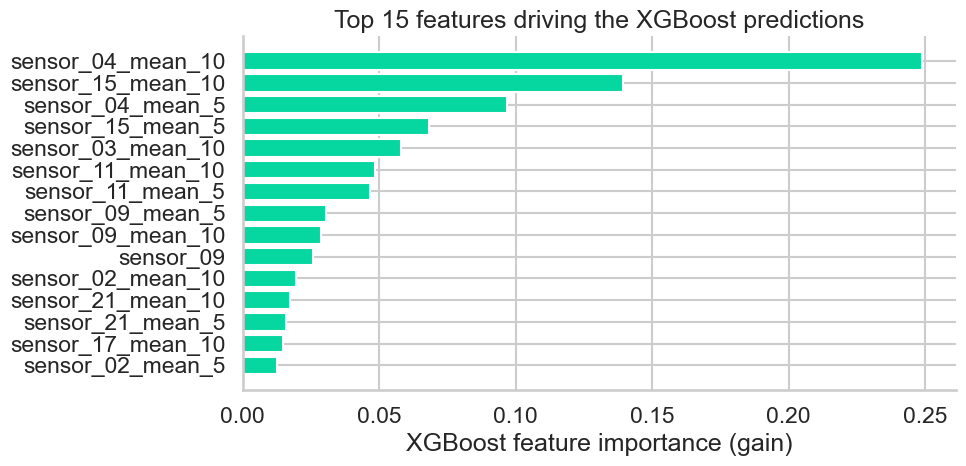

Rolling statistics on high-pressure-compressor sensors dominate — exactly the channels whose drift was visible in the EDA notebook.
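A sketch of what "rolling statistics" can mean per sensor channel, assuming a trailing window of rolling mean and standard deviation. The window length of 5 and the name `rolling_features` are placeholders, not the values used in the notebooks.

```python
from statistics import mean, pstdev


def rolling_features(values, window=5):
    """Return (rolling mean, rolling std) pairs over a trailing window.

    Only the current and earlier cycles are used, so a feature at cycle i
    never sees data from later cycles.
    """
    feats = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        feats.append((mean(chunk), pstdev(chunk)))
    return feats


sensor = [47.2, 47.3, 47.3, 47.5, 47.8, 48.1]  # synthetic readings for illustration
for cycle, (m, s) in enumerate(rolling_features(sensor, window=3), start=1):
    print(cycle, round(m, 3), round(s, 3))
```

The trailing window is a deliberate choice: a centred window would leak future readings into the features and inflate benchmark results.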

Coming next

Operating-regime clustering, regime-aware normalisation, and an LSTM sequence model over full trajectories — the setting where XGBoost is expected to clearly win.

Track progress on GitHub →

Interactive concept preview

A self-contained Plotly visualisation of what the same pipeline looks like running against real-time sensor streams. The data is synthetic so the page stays static — for the actual benchmark numbers, see the cards above.

Concept summary

| Parameter | Value |
| --- | --- |
| Sensors | Vibration, Temperature, Pressure |
| Cycles | 300 operating cycles |
| Alert threshold | Health score < 30% |
| Implementation | eastani/predictive-maintenance-cmapss ↗ |

How I Work

A practical workflow for industrial data projects where model quality, domain validity, and deployment constraints all matter.

Start from failure modes, maintenance workflows, and business impact before touching the model. The target is a decision, not a chart.

Define schemas, sensor semantics, validation checks, and reproducible datasets so experiments are explainable and repeatable.

Compare simple baselines, inspect feature behavior, and test whether the result matches equipment physics and field intuition.

Turn notebooks into tested pipelines, dashboards, alerts, and documentation that service, quality, and R&D teams can actually use.
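As an illustration of the schema-and-validation step in this workflow, here is a minimal sketch of a strict row parser for C-MAPSS-style text files. It assumes the standard layout of 26 whitespace-separated columns (unit, cycle, 3 operating settings, 21 sensors). The `Reading` class and `parse_row` function are hypothetical names, not the repository's API.

```python
from dataclasses import dataclass

N_SETTINGS = 3   # operating-setting columns
N_SENSORS = 21   # sensor-measurement columns


@dataclass(frozen=True)
class Reading:
    unit: int
    cycle: int
    settings: tuple
    sensors: tuple


def parse_row(line):
    """Parse one row, failing loudly instead of silently coercing bad data."""
    fields = line.split()
    expected = 2 + N_SETTINGS + N_SENSORS
    if len(fields) != expected:
        raise ValueError(f"expected {expected} columns, got {len(fields)}")
    unit, cycle = int(fields[0]), int(fields[1])
    if unit < 1 or cycle < 1:
        raise ValueError("unit and cycle must be positive integers")
    values = tuple(float(x) for x in fields[2:])
    return Reading(unit, cycle, values[:N_SETTINGS], values[N_SETTINGS:])


row = "1 1 " + " ".join(["0.5"] * (N_SETTINGS + N_SENSORS))
reading = parse_row(row)
print(reading.unit, reading.cycle, len(reading.settings), len(reading.sensors))
```

Rejecting malformed rows at load time keeps every downstream experiment tied to a dataset whose shape is known, which is what makes results repeatable.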

Architecture

End-to-end industrial data flow aligned with Cognite Data Fusion's integration model.

Contact

Open to Data Engineer / Data Scientist opportunities in industrial AI and IIoT platforms. Let's connect.